
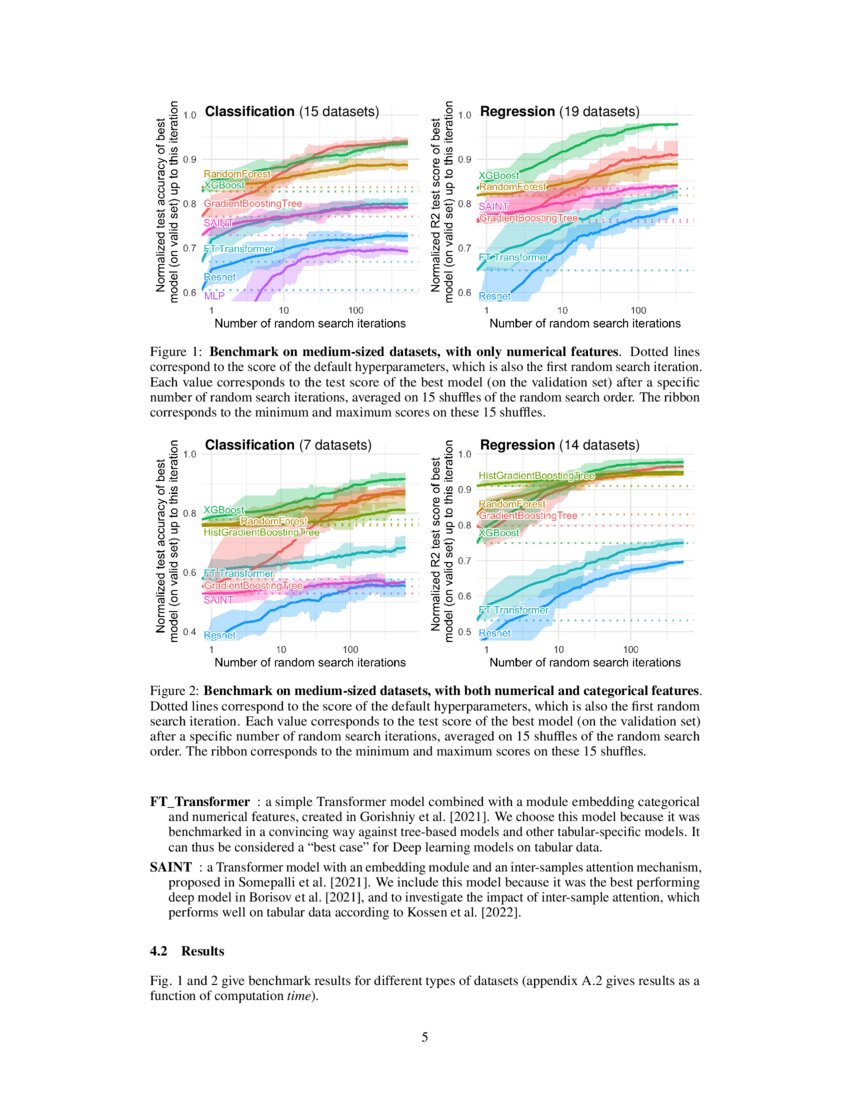

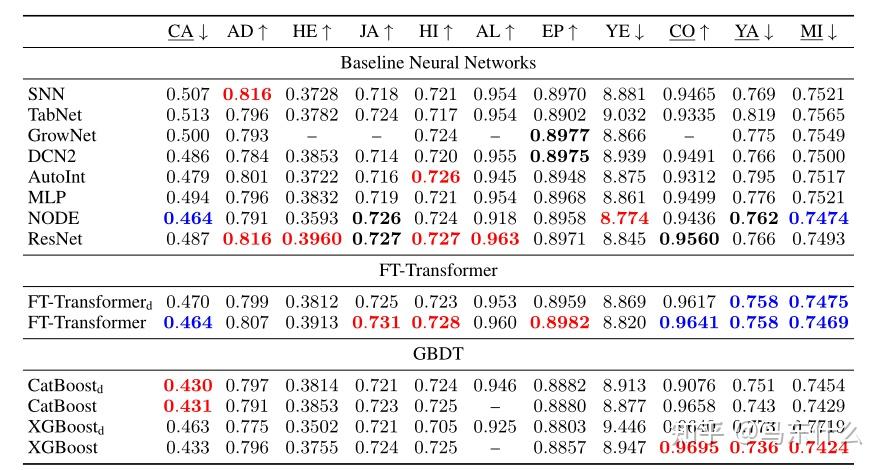

In this month's PRG, Sanyam will cover the NeurIPS 2021 paper "Revisiting Deep Learning Models for Tabular Data" and will look at DL models and whether we can effec…

Yury Gorishniy, Ivan Rubachev, Valentin Khrulkov, Artem Babenko

Abstract: The existing literature on deep learning (DL) for tabular data proposes a wide range of novel architectures and reports competitive results on various datasets. However, the proposed models are usually not properly compared to each other, and existing works often use different benchmarks and experiment protocols. As a result, it is unclear to both researchers and practitioners which models perform best. Additionally, the field still lacks effective baselines, that is, easy-to-use models that provide competitive performance across different problems. In this work, we perform an overview of the main families of DL architectures for tabular data and raise the bar of baselines in tabular DL by identifying two simple and powerful deep architectures. The first one is a ResNet-like architecture, which turns out to be a strong baseline that is often missing in prior works. The second model is our simple adaptation of the Transformer architecture for tabular data, which outperforms other solutions on most tasks. Both models are compared to many existing architectures on a diverse set of tasks under the same training and tuning protocols. We also compare the best DL models with Gradient Boosted Decision Trees (GBDT) and conclude that there is still no universally superior solution.

We start from a thorough review of the main families of DL models recently developed for tabular data. We then carefully tune these models and evaluate them on a wide and diverse range of datasets and tasks to investigate their relative performance, which reveals two significant findings.

Recent deep learning models for tabular data now compete with the traditional ML models based on decision trees (GBDT). Unlike GBDT, deep models can additionally benefit from pretraining, which is a workhorse of DL for vision and NLP.

In particular, … is intended to facilitate the combination of text and images with corresponding tabular data using wide and deep models.

Available models:

- Neural Oblivious Decision Ensembles for Deep Learning on Tabular Data
- TabNet: Attentive Interpretable Tabular Learning
- Mixture Density…
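To make the two architecture families mentioned above a little more concrete, here is a minimal, dependency-free sketch of (a) a generic residual MLP block applied to one table row and (b) one common way to feed tabular features to a Transformer: give every numerical feature its own embedding ("token") and prepend a [CLS] token. This is not the paper's code. All function names (`linear`, `layer_norm`, `resnet_block`, `tokenize_numeric`, `add_cls_token`, `random_matrix`) are hypothetical, the per-feature tokenizer is an assumption about how such an adaptation can work, per-sample layer normalization stands in for whatever normalization a real model uses, dropout is omitted, and the weights are random rather than trained.

```python
import math
import random
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def linear(x: Vector, weight: Matrix, bias: Vector) -> Vector:
    """Dense layer y = W x + b; `weight` has one row per output unit."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weight, bias)]


def relu(x: Vector) -> Vector:
    return [max(0.0, v) for v in x]


def layer_norm(x: Vector, eps: float = 1e-5) -> Vector:
    """Normalize one sample to zero mean and unit variance (no learned scale/shift)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def resnet_block(x: Vector, w1: Matrix, b1: Vector, w2: Matrix, b2: Vector) -> Vector:
    """Hypothetical residual block: x + Linear(ReLU(Linear(Norm(x)))), no dropout."""
    hidden = relu(linear(layer_norm(x), w1, b1))
    update = linear(hidden, w2, b2)  # projects back to len(x) so the skip connection lines up
    return [v + u for v, u in zip(x, update)]


def tokenize_numeric(x: Vector, weights: Matrix, biases: Matrix) -> Matrix:
    """Turn each numerical feature x_j into its own embedding x_j * W_j + b_j."""
    return [[x_j * w + b for w, b in zip(w_j, b_j)]
            for x_j, w_j, b_j in zip(x, weights, biases)]


def add_cls_token(tokens: Matrix, cls: Vector) -> Matrix:
    """Prepend a [CLS] embedding whose final state would feed a prediction head."""
    return [list(cls)] + tokens


def random_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    """Small random weights standing in for trained parameters."""
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]


if __name__ == "__main__":
    rng = random.Random(0)
    x = [0.5, -1.2, 3.0]  # one row of a table with three numerical features
    n_features, d_hidden, d_token = len(x), 8, 4

    # ResNet-like path: one residual block over the raw feature vector.
    out = resnet_block(x,
                       random_matrix(d_hidden, n_features, rng), [0.0] * d_hidden,
                       random_matrix(n_features, d_hidden, rng), [0.0] * n_features)
    print(len(out))  # 3

    # Transformer path: one token per feature plus [CLS]; attention layers would follow.
    tokens = add_cls_token(
        tokenize_numeric(x, random_matrix(n_features, d_token, rng),
                         random_matrix(n_features, d_token, rng)),
        cls=[0.0] * d_token)
    print(len(tokens), len(tokens[0]))  # 4 4
```

Plain lists keep the sketch runnable without any libraries; a practical model would use a tensor framework, process whole batches, and stack several such blocks (or attention layers over the tokens) before a prediction head.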
